I’ve used a number of GNU/Linux distributions over the years, some more than others. This weekend, I’ve apparently been overcome by nostalgia and revisited a distribution I haven’t used in years.

Early beginnings

When I first started getting serious about leaving Windows in the late '90s, I used RedHat 5.2 as my primary OS. Release after release went by, and I became convinced it was steadily losing its charm. There was a limited number of packages available, and I regularly had to hit rpmfind.net to get the things I wanted installed. First, RedHat 7 shipped with a broken compiler. Next, RedHat 8 shipped with Bluecurve, which seemed like a crippled mash of GNOME and KDE, so getting certain things to compile against either could be quite difficult. Given the small repositories, compilation was frequently necessary. There had to be a better way…

I occasionally glanced Debian's way, but at the time it seemed too archaic. I could not understand how anything with such a primitive installer could be any good. For example, why would one ever want to manually specify which kernel modules to install? Why not at least offer an option to install the lot and just load what's required, as other distributions did? Then, once you got the thing installed and working, the packages would be quite old. You could switch to testing, but then you no longer had a stable distribution. The whole distribution felt very server/CLI-orientated, and at the time (having not long ago come from a Win9x environment) that was not easy to appreciate. It also lacked a community with a low barrier to entry: support happened on IRC and mailing lists rather than forums.

Enter Gentoo. It always seemed to make the headlines on Slashdot, so I had to see what all the fuss was about. Certainly it was no snap to install, but the attention to detail was incredible. The boot-splash graphics (which didn't hide the important start-up messages, as other distros later tried to do) and the automatic detection of sound and network cards were a sight for sore eyes. For many years the LiveCD alone was my go-to CD for system rescues, thanks to its huge hardware support, ease of use, high-resolution console and short boot time (as it didn't come with X). Further, everything was quite up to date – certainly more so than basically anything else at the time. Later I came to realise the beauty of rolling releases and community.

So I compiled, and compiled, and compiled some more. My AMD Duron 900MHz with 512MB of RAM and 60GB IDE HDDs seemed pretty sweet at the time, but compiling X or GNOME still basically required leaving the machine on overnight. It didn't help that I only had a 56k dial-up connection. Sometimes packages would fail to compile, so I would have to fix them and resume compilation the next morning (although the fix was only ever a Google search away). Given the amount of time I invested in learning Gentoo GNU/Linux instead of studying for my university courses, it's amazing I managed to get my degree. I even went so far as to install Gentoo on my Pentium 166MMX laptop with 80MB of RAM (using distcc and ccache).

Later, during my university work-experience year, I used Gentoo on the first production web server I ever built. At one point another, more “senior” system administrator came along to fix an issue it had with qmail, and he was mighty upset to find Gentoo. Not because it was a rolling release (and I'm pretty sure Hardened Gentoo didn't exist at the time either), but because he didn't know the rc-update command (he expected RedHat's chkconfig) and was only familiar with System V and BSD init scripts. I think he would have had a heart attack if he had later seen Ubuntu's Upstart system (which has also been quite inconsistent across releases over the years)!

During my final university year, I used Gentoo again for a team-based development project. For years it seemed so awesome that I could hardly bear to look at another distro. The forums were always active, and the flexibility its ports-based system provided was unmatched by any other GNU/Linux distribution. However, it was around this time that Ubuntu started making Slashdot headlines – perhaps more so than Gentoo. Whilst I was mildly curious and read the articles, I didn't immediately bother to try Ubuntu myself. After all, it was just a new Debian fork, and I knew about Debian. The next year I attended the 2005 Linux.conf.au in Canberra, still with my trusty P166 laptop running Gentoo, I might add. When I arrived in the hallway where everyone was browsing the web on their laptops over the free wifi, it was immediately obvious I was the odd one out. Not just because my computer was ancient by comparison, but because I was just about the only person who *wasn't* running Ubuntu. I think I saw literally one other laptop not running Ubuntu – I simply could not believe it. I had to see what the fuss was about.

A few days after the conference, I partitioned my hard disk and installed Ubuntu Warty. Hoary had just been released, but I already had a Warty install CD and I was still limited to dial-up: broadband plans required a one-year contract and installation fees, and I was frequently moving around. Unlike Debian's, the Warty installation was quick and simple. The community was quite large, and while it seemed smaller than Gentoo's, it was growing rapidly. Because user-friendly documentation actually existed, I felt more comfortable using the package management system, and I didn't need to use the horrible dselect package tool either. The packages were quite up to date; perhaps not on the same level as Gentoo, but close enough that the differences didn't matter. Ultimately I was getting all the notable benefits Gentoo had offered, without the downside of huge downloads and slow compilations. By the end of the year I was no longer using Gentoo on my personal machines.

Side-note:

Seemingly inspired by Ubuntu, Debian has since made significant improvements: shorter release cycles, more beginner- and user-friendly support pages and wikis (although no forums so far), a much easier and more efficient installer, etc. Additionally, because of my familiarity with the Debian-based Ubuntu, I later found myself far more comfortable using Debian. I might even go so far as to say I enjoy it. Indeed, my home server is a Debian box running Xen, whereas Ubuntu doesn't even support running as a Xen Dom0. Further, unlike Ubuntu, Debian hasn't changed its init system every six months. Each release does things the way you would expect, whereas Ubuntu frequently “rocks the boat”, requiring more time spent going through documentation to figure out how new releases work. Being a free software supporter, I also don't appreciate Ubuntu recommending that I install the proprietary Flash player in Firefox, or offering to automatically install nVidia's proprietary drivers. If I need to use proprietary software, I want it to be a very conscious decision so I know exactly which areas of my OS are tainted. In some ways, Debian is stealing back some of the thunder it lost to Ubuntu, at least in my book.

Life after Ubuntu

The installation I had been using on my personal desktop was an Ubuntu install, upgraded with each release from 7.10 (Gutsy) to the current 10.10 (Maverick), with the occasional third-party PPA and whatnot added over the years. Although I had upgraded my file systems to ext4, I knew I would need to reformat them from scratch to get the full performance benefits. There was probably a ton of bloat on the system (unused packages, hidden configuration files and directories under $HOME, etc.), and many configuration files probably differed substantially from the improved defaults one would get on a fresh, modern install. As this weekend was a day longer than usual thanks to Australia Day, I decided it was a good time to reinstall.

What to install, however? Ubuntu again? I was seriously getting tired of it. It just didn't feel interesting any more, although it did the job. Additionally, Ubuntu doesn't strip the kernel of binary blobs like other distributions do (including Debian starting with Squeeze) – another cross in my book. No, it was time for a change. Debian might have been the obvious choice, particularly since Squeeze was released recently, making it fresh and new. However, I was already somewhat familiar with Squeeze (I installed it on my Asus EeePC 701 netbook last week) and it still felt a bit dated in some areas compared to Ubuntu. I'm also conscious that the next version probably won't be released as stable for at least another year or two. Further, I don't appreciate how Debian considers many of the GNU `*-doc` packages non-free. I want those installed, but I hate the thought of enabling non-free repositories just to get them. Perhaps I could find something completely free instead?

Completely free GNU/Linux options

With that in mind, I did a little bit of investigation into completely free GNU/Linux distributions (as per the FSF guidelines). Let’s look at the list:

Blag

Based on Fedora. I don't like Fedora, but I might be willing to look past that. I do, however, need a distribution that is kept up to date. According to Wikipedia, “the latest stable release, BLAG90001, is based on Fedora 9, and was released 21 July 2008.” In all likelihood, this means the stable release hasn't seen security updates in a long time (Fedora 9 dropped out of support some time ago). The Blag website does list a 140k RC1 version based on Fedora 14 (it's not clear when this was posted), but other parts of the website, such as the Features section, still reference Fedora 9 as the base distribution. It would seem the latest Blag RC has only appeared after well over two years, and it's not even marked as stable.

Further, I’m a little skeptical of installing a distribution that is basically the same as another distribution with bits stripped out to make it completely free – there is always the chance that something was missed, or a certain uncommonly-installed package will malfunction with part of a dependency removed. On the face of it, Blag fills me with doubts.

Dragora

Something new, and not based on any other distribution. Sounds intriguing. The coolness factor alone of using something unlike anything I've used before earns it bonus points, if only because the people behind it must be serious to take on so much work (e.g. a new init system, new package management system, installer, documentation, etc.). The Wikipedia page is light on details, and the project website redirects to a broken wiki with the text “Be patient: we are working in the content of the new site. – Thanks”. There is a link to the old wiki at the bottom of the page, but it redirects to a Spanish page by default. Fortunately, there is an English version you can select, and it looks mostly as complete as the Spanish version.

It's nice to see this project has its own artwork and a few translations. There is also a download wiki page which indicates a regular release cycle. Although the wiki doesn't say which architectures are supported, at least two of the mirrors provide x86_64 downloads. SHA1 checksums and GPG signatures are provided for the images – always a good sign that integrity has been considered. As an aside, Arch GNU/Linux apparently doesn't verify the signatures of packages downloaded via its package manager, which is why I would never consider using it.
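For anyone who hasn't checked an image before, the checksum half of that verification boils down to hashing the downloaded file and comparing the result with the published value. Below is a minimal Python sketch, assuming the checksum file uses the common `sha1sum` output format (`<hex digest>  <filename>`); the function names are my own, and it does not cover the GPG signature check, which you would still do separately with `gpg --verify` against the signature file.

```python
import hashlib


def sha1_of(path, chunk_size=1 << 20):
    """Return the hex SHA1 digest of a file, reading it in 1MiB chunks
    so a multi-hundred-megabyte ISO never has to fit in memory."""
    h = hashlib.sha1()
    with open(path, "rb") as f:
        # iter() with a sentinel keeps calling f.read() until it returns b"".
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def verify(image_path, checksum_line):
    """Compare a file against one line in sha1sum format: '<hex digest>  <filename>'.

    Only the digest (the text before the first space) is used; the filename
    part of the line is ignored.
    """
    expected, _, _name = checksum_line.strip().partition(" ")
    return sha1_of(image_path) == expected.lower()
```

A mismatch means the download is corrupt (or tampered with) and should be fetched again before burning.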

Dynebolic

From the FSF’s description, Dynebolic focuses on audio and video editing. I actually do intend to purchase a video camera at some point this year so I can start uploading videos, however for now I have no need for such tools.

gNewSense

The distribution Richard Stallman apparently uses as of late. I suspect that since Stallman now owns a MIPS-based netbook, he found gNewSense supported that configuration better than Ututo (which he used previously). Unfortunately, I use an Intel i7 with 6GB of RAM and a GTX480 graphics card with 1.5GB of RAM – I need a 64-bit distribution to address all that memory, and gNewSense doesn't support the x86_64 instruction set. According to Wikipedia at the time of writing, the last stable release (v2.3) was also 17 months ago.

Musix

Based on Knoppix. Isn’t Knoppix a blend of packages from various distributions and distribution versions? Last I checked (admittedly many years ago) it didn’t look like something easy to maintain. Musix also apparently has an emphasis on audio production, which would explain the name. As mentioned previously I don’t have a requirement for such tools.

Trisquel

I've actually used this at work for Xen Dom0 and DomU servers (although it's surely not the best distribution for Dom0 – I had to use my own kernel and Xen install), and it works quite well. Basically, the latest version is just a re-badged Ubuntu 10.04 LTS with all the proprietary bits stripped out. Unfortunately, in many ways it's a step back from what I was already using on my desktop: older packages than Ubuntu 10.10, a far smaller community and much less support and fewer resources (although 90+% of whatever works for Ubuntu 10.04 will work for the latest Trisquel, 4.0.1 at the time of writing). From what I can see, there is no compelling reason for me to use Trisquel as the primary OS on my personal desktop at this time; I would probably be better off installing Debian Squeeze with the non-free repositories disabled.

Ututo

Based on Gentoo. Sounds good, right? However upon further investigation, it’s clear that the documentation is entirely in Spanish! This may not be a problem for Stallman, but I don’t speak (or read) Spanish.

Venenux

Apparently this distribution is built around the KDE desktop. I personally don’t like KDE and don’t run it, so it doesn’t bode well so far. Heading on over to the Venenux website I once again fail to find any English, so scratch Venenux off the list.

So that’s it – the complete list of FSF-endorsed GNU/Linux distributions. Of all of the above, there is only one worthy of further investigation for my purposes – Dragora.

Dragora on trial

I downloaded, verified and burnt the 2.1 stable x86_64 release, booted it, and attempted an installation.

As is the case with the website, during installation I faced quite a bit of broken English – another potential sign that the project doesn’t have much of a community around it. However I wasn’t about to let that get to me. Perhaps it did have a large community, but simply not a large native-English-speaking community? Besides, English corrections aren’t a problem – I can take care of that by submitting patches at any time should upstream accept them.

The next thing I noticed was that my keyboard wasn't working. I unplugged it and plugged it back in, then pressed Caps Lock a few times, but the Caps Lock LED never lit up. Presumably the kernel wasn't compiled with USB support (or the required module wasn't loaded). Luckily, I managed to find a spare PS/2 keyboard, which worked.

As with Gentoo, the first step involved manually partitioning my hard drives. I like to use LVM on RAID, and fortunately all the tools needed to set that up were provided on the CD. It wasn't clear whether I needed to format the partitions as well, so I formatted over 2TB of disks anyway. Unfortunately, during the setup program stage, the tool formatted my partitions again despite my having checked the box telling it not to, which wasted a lot of time.

While waiting, I looked over some of the install scripts and noted that they were written in bash. All the comments appeared to be in Spanish, even though all the installer prompts were in English, which I found both strange and frustrating. I also elected to install everything, as that was the recommended option. Lastly, I attempted to install GRUB… and things didn't go so well from that point onwards. As far as I could tell, the kernel could not support an mdadm+LVM2 root file system (/boot was on a plain mdadm RAID1 setup, which I know normally works). It looked like I would either need to figure out why the kernel wasn't working, or reformat everything and stop using my 4-disk RAID5 array to host an LVM2 layout containing the root file system. At this point my patience had truly run out, and I decided it was time to try something else. I also never managed to figure out how the package management system worked, as that was the one section missing from the wiki documentation.

An old friend

So what did I end up with? Gentoo! Not exactly a completely free-software distribution… in fact, I was shocked by just how much proprietary software Gentoo supports installing. The kernel won't have all the non-free blobs removed either, although I suspect I don't need to compile any modules that would load them. Gentoo also feels very nostalgic to me right now.

Since the last time I used it, there have been some notable changes and improvements. For example, stage1 and stage2 installations are no longer supported. I only ever used stage1 installs previously, so this felt a bit like cheating. Additionally, when compiling multiple programs at once, package notes are now displayed again at the end of compilation (regardless of whether the last package succeeded or failed), so you actually have a chance to read them. Perhaps the most important change, however, is that source file downloads now happen in the background in parallel with compilation (which can easily be seen by tailing the logs during compilation). This saves a lot of time over the old download, compile, repeat process of years ago.
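The parallel-download behaviour is essentially a producer/consumer pipeline: one worker fetches source tarballs while another compiles whatever has already arrived. Here is a minimal Python sketch of the idea. It is not Portage's actual implementation (Portage controls the real thing through the `parallel-fetch` entry in `FEATURES`); `fetch`, `build` and `emerge_pipelined` are hypothetical placeholders standing in for the real download and compile steps.

```python
import queue
import threading


def fetch(pkg):
    """Hypothetical stand-in for downloading a package's source tarball."""
    return f"{pkg}.tar.gz"


def build(tarball):
    """Hypothetical stand-in for compiling an already-downloaded tarball."""
    return tarball.replace(".tar.gz", "") + " built"


def emerge_pipelined(packages):
    """Download in a background thread while the calling thread compiles.

    The fetcher pushes each tarball onto a queue as soon as it arrives, so
    compiling package N overlaps with downloading package N+1 instead of
    strictly alternating download, compile, download, compile.
    """
    ready = queue.Queue()
    done = object()  # sentinel marking the end of the download list

    def fetcher():
        for pkg in packages:
            ready.put(fetch(pkg))
        ready.put(done)

    worker = threading.Thread(target=fetcher, daemon=True)
    worker.start()

    results = []
    # Queue order matches the input order, so builds stay in dependency order.
    while (item := ready.get()) is not done:
        results.append(build(item))
    worker.join()
    return results
```

For example, `emerge_pipelined(["glibc", "gcc"])` returns `["glibc built", "gcc built"]`. With real network and compile times, the total is roughly the longer of the two streams rather than their sum, which is where the time saving comes from.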

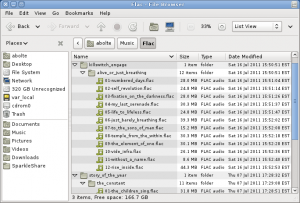

As before, not all USE flags are listed in the documentation, and I have run into some circular dependency issues by using the doc USE flag (which is apparently common, according to my web searches). I've had to add 6 lines to the package.accept_keywords and package.use files to get everything going. Now, however, I'm all set up. I've compiled a complete GNOME desktop with the GIMP, LibreOffice and many others. Unlike the days of my Duron, which had to be left on overnight, my overclocked i7 950 rips through the builds – sometimes as quickly as the sources can be downloaded from a local mirror over my ADSL2+ connection.

Although the Gentoo website layout has remained largely unchanged over the years, and some of the documentation is ever so slightly out of date, I get the sense that Gentoo is still a serious GNU/Linux distribution. I didn't encounter any compilation issues that couldn't be quickly worked around, and it feels very fast. I still use Ubuntu 10.10 at work and Debian Squeeze on my netbook and home server, but going back to ebuilds feels strange and somewhat exciting. If only Gentoo focused more on freedom, it would be darn near perfect.